The Software Industry Has a Dirty Secret About QA. It's Time to Say It Out Loud.

This is a long read. Intentionally.

I have worked as a freelance QA leader across many companies. Different industries. Different team sizes. Different tech stacks. But the same patterns kept showing up in companies that never treated QA as an independent function. Companies struggling with quality not because of bad engineers, but because they never learned how to treat QA properly. This article is everything I saw. And the future I see coming.

The Day 1 Demotivation.

Most freshers who get placed into QA don't stay quiet about it.

They send emails to HR within weeks. Requesting a switch to development. Or DevOps. Or infrastructure. Or anything else.

Just not QA.

And the reason is not complicated.

From day one, they watch how other teams talk about QA. The tone. The eye rolls. The offhand comments in standups. Nobody says it directly. They don't have to.

The message lands clearly:

QA is where careers go to stall. Development is where the real engineers are.

And then there is the other message. The one developers send without even realizing it.

Developers treat QA as the easiest job in the room.

Not a discipline. Not an engineering function. A checkbox. Something a less technical person handles while the real work happens elsewhere. A role you hand off so you can get back to building.

That attitude travels fast. Freshers absorb it on day one.

No senior engineer pulls a fresher aside and says "QA is where you will learn how systems truly fail." No team lead talks about QA as the foundation for understanding complex software at its edges.

Instead, QA is treated as a waiting room. Something you escape from. Not something you grow in.

Freshers are perceptive. They watch how QA engineers are treated by every team around them. The low recognition. The last-minute pressure. The suggestions ignored. The overtime that is simply expected without question. And they make a rational decision.

They want out before they are fully in.

This is not a perception problem that QA needs to fix with better branding. This is the industry's failure. Earned over decades.

Every company says "quality matters."

Every engineering values document has it. Every all-hands meeting mentions it. Every job description lists it.

And yet the people responsible for quality have never been treated like they matter.

This is why freshers flee QA. Not because they don't understand it. Because they understand it perfectly.

The Meeting Nobody Invited QA To. The Deadline QA Still Has to Meet.

Architecture is decided without QA. Tech stack is chosen without QA. Delivery timelines are committed without QA.

Entire systems are designed and built before anyone asks the most basic question:

How are we going to test this?

Then, when development is nearly done and the timeline is already collapsing, someone finally says: "QA can start testing."

At that moment, QA inherits everything. The fixed architecture. The rushed design. The unrealistic deadline. Every shortcut taken in the name of speed. Every corner cut to hit a date.

And the expectation remains unchanged: QA must guarantee quality.

QA is handed the consequences of every decision they were never invited to make.

This is not engineering. This is organizational hypocrisy.

Here is the pattern every QA engineer recognizes.

Development delays during the sprint. Stories slip. Code arrives late in the evening. The pressure shifts entirely onto QA.

Developer finishes at 6pm. QA stays until 9pm. Sprint is delayed. QA works the weekend. Release is tomorrow. QA works through the night.

Development delays become QA overtime. Every single sprint. Every single release.

Nobody asks why development was not managed better. Nobody examines why the QA window keeps shrinking sprint after sprint. The velocity metrics stay clean on paper. The pressure just moves. Always in one direction. Always onto QA.

And when something breaks in production, the question never changes:

"Why didn't QA catch this?"

Nobody asks why the developer's delay became QA's emergency. The sprint looks clean. Only QA feels the damage.

The Thing Nobody Says Out Loud: Most Developers Don't Test Their Own Work.

Most developers do not test the positive flows of their own work before pushing to QA.

Some run it locally. On their own machine, with their own test data, under ideal conditions that reflect nothing close to the real world. Then they mark the story done and move on.

And that is the generous version.

Some don't even do that. They push after compilation. The build succeeded. That's enough. Off to QA it goes.

Compilation is not testing.

A successful build proves that the code compiles and nothing more. It says nothing about whether the feature works. Nothing about whether the UI renders correctly. Nothing about whether the user can actually complete the task the ticket described.
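To make the gap concrete, here is a minimal sketch in Python. The discount feature, the function name apply_discount, and the ticket scenario are all hypothetical, invented only for illustration. The source compiles cleanly, so the "build" is green, yet the single flow the ticket describes fails.

```python
# Hypothetical feature code for a ticket: "apply a percentage discount to a cart total".
SOURCE = '''
def apply_discount(total, percent):
    # Bug: adds the discount instead of subtracting it.
    return total + total * percent / 100
'''


def builds(source: str) -> bool:
    """Return True if the source compiles, i.e. the 'build' is green."""
    try:
        compile(source, "<feature>", "exec")
        return True
    except SyntaxError:
        return False


def happy_path_passes(source: str) -> bool:
    """Run the one scenario the ticket describes: 10% off 200 should be 180."""
    namespace = {}
    exec(compile(source, "<feature>", "exec"), namespace)
    return namespace["apply_discount"](200, 10) == 180


build_ok = builds(SOURCE)
flow_ok = happy_path_passes(SOURCE)
print(f"build green: {build_ok}, happy path works: {flow_ok}")
# prints: build green: True, happy path works: False
```

One check of this kind, run by the developer before handoff, is enough to stop the story from reaching QA in a state where the basic flow is broken.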

QA engineers know this pattern intimately.

A story arrives in QA. Within five minutes of running the most basic happy-path flow, the exact scenario the ticket was written for, it fails. Not an edge case. Not an integration issue. Not a performance problem under load. The basic functionality does not work.

That is not a QA finding. That is a development failure that was handed across the line.

Verifying the positive flow before handoff is not QA's responsibility. It is the developer's responsibility.

QA exists to find what is genuinely hard to find. Edge cases. Integration failures. Security vulnerabilities. Race conditions. System behavior under real-world stress and unexpected inputs.

QA does not exist to discover that a button built this sprint does not work.

When a basic flow fails in QA, the clock that matters is not the QA clock. It is the development clock. The story was never ready. It was never done.

The recognition problem.

When a QA engineer finds a critical defect that would have caused a production outage, it becomes a ticket in the backlog.

When a developer fixes that same defect, it becomes an engineering success story shared in the team channel.

Finding the bug: routine work. Fixing the bug: celebrated achievement.

The engineer who prevents the fire gets no mention. The engineer who puts it out gets the applause.

The people who save the product quietly are never the ones who get remembered.

Responsibility without authority.

QA is held accountable for quality outcomes.

But QA doesn't choose the architecture. QA doesn't define development practices. QA doesn't control the timeline. QA doesn't decide what "done" means. QA doesn't set the requirements. QA doesn't influence the decisions that determine whether quality is even achievable.

You cannot hold someone responsible for an outcome they were never given the power to influence.

Imagine asking a building inspector to guarantee structural safety after the building is already constructed, the materials are fixed, and the workers have left.

That is exactly what the software industry does to QA. Every single release.

QA Has Been Asking for the Same Things for Years. The Answer Is Always the Same.

Better UI standards so element identifiers are stable and selectors don't break on every release. Better logging so defect investigation doesn't become hours of archaeology through unstructured noise. Stable APIs so automation doesn't break silently every time an undocumented change goes out. Clear contracts between services so integration testing isn't built on guesswork and assumption. Testable architecture so automation can actually scale instead of collapsing under its own brittleness. Consistent naming conventions so QA doesn't have to maintain a mental translation table for every layer of the system.

These are not QA preferences. They are not testing team wishlist items. They are basic engineering standards that make the entire product more reliable, observable, and maintainable.

Every senior developer already knows this.

And yet these suggestions are quietly deprioritized sprint after sprint because implementing them takes time, and that time shows up as a charge against feature delivery.

So QA is expected to figure out testing later. Automation stays brittle. Coverage stays shallow. And every quarter someone asks why the regression suite keeps failing.

Brittle automation is not a QA failure. It is the direct consequence of building systems that were never designed to be tested.
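One of the requests above, clear contracts between services, can be sketched in a few lines. This is an illustration under assumptions, not a description of any particular tool: the order-service payload, the field names, and the contract_violations helper are all hypothetical. The point is that an undocumented change fails loudly at the contract instead of silently breaking automation downstream.

```python
from typing import Any

# Hypothetical contract for an order-service response: field name -> expected type.
ORDER_CONTRACT = {"order_id": str, "status": str, "total_cents": int}


def contract_violations(payload: dict[str, Any], contract: dict[str, type]) -> list[str]:
    """List every way the payload departs from the agreed contract."""
    problems = []
    for field, expected in contract.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected):
            actual = type(payload[field]).__name__
            problems.append(f"{field}: expected {expected.__name__}, got {actual}")
    return problems


# The response as documented.
documented = {"order_id": "A-1", "status": "paid", "total_cents": 4200}
# An undocumented change: 'total_cents' renamed to 'total' and sent as a float.
changed = {"order_id": "A-1", "status": "paid", "total": 42.0}

print(contract_violations(documented, ORDER_CONTRACT))  # []
print(contract_violations(changed, ORDER_CONTRACT))     # ['missing field: total_cents']
```

A check like this belongs to whoever owns the service, not to the team that discovers the rename three sprints later.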

Same Company. Same Manager. Same VP. Zero Independence.

There is a contrast inside the industry that rarely gets discussed openly.

QA teams that operate outside development hierarchies often have genuine authority.

Independent QA organizations. Third-party testing companies. Testing teams in separate vendor relationships.

These teams are harder to ignore. Their mandate is clear and structurally protected. Their job is to find problems. Nobody in the delivery chain can quietly override their findings or reshape their conclusions before they reach leadership.

When development happens at one company and QA happens at another, as in many enterprise software procurement arrangements, the testing team carries real weight.

They are not subject to the same performance review system. Their leadership does not report to the same VP. Their findings cannot be softened in a private one-on-one conversation before the report goes out.

Nobody above them has a personal interest in making the results look better than they are.

That is what structural independence looks like. And that independence is precisely what gives their findings credibility.

Now look at what happens when QA sits inside the same company, under the same development leadership, in the same agile team, with the same manager controlling performance ratings and promotions.

That independence disappears entirely.

QA becomes another delivery function.

The QA engineer is no longer someone whose job is to protect the product and the customer. They become someone whose job is to help the team hit sprint targets.

The title says quality. The incentive structure says don't slow us down.

This is why so many QA engineers inside embedded agile teams carry the quiet frustration of knowing what the right answer is and having no structural mechanism to make it heard.

The problem is not their skill. The problem is not their judgment.

The problem is that the organization was never designed to listen to them.

QA works for the company. Not for the leader above them.

QA exists to protect the product, the company, and the user. Not to make sprints look clean. Not to keep velocity metrics comfortable. Not to avoid raising concerns when a deadline is approaching.

When a QA engineer is pressured to downgrade a defect priority because the release is tomorrow, they are not being asked for a technical judgment. They are being asked to absorb organizational risk on behalf of a leader who will not carry the consequences when that defect reaches production.

When a QA leader receives a lower performance rating for raising too many concerns, their mandate has been quietly redefined. They are no longer being evaluated on quality outcomes. They are being evaluated on compliance with delivery pressure. The job title has not changed. The actual job has.

That is not a QA problem. That is a governance problem.

The companies that understand this build structures that reflect it. They give QA reporting lines that are independent of development leadership. They evaluate QA on quality outcomes, not on how little friction was created. They treat the engineer who finds a critical defect before release as someone who just protected the business.

The companies that don't understand this keep asking why quality is poor, why production incidents keep recurring, and why their best QA engineers keep resigning.

The answer is always the same. You told them through ratings, through how their concerns were received, through who got promoted and who got sidelined, that their real job was to work for the leader. Not for the product. Not for the company.

QA that works only for the leader above them is not quality assurance. It is quality theater.

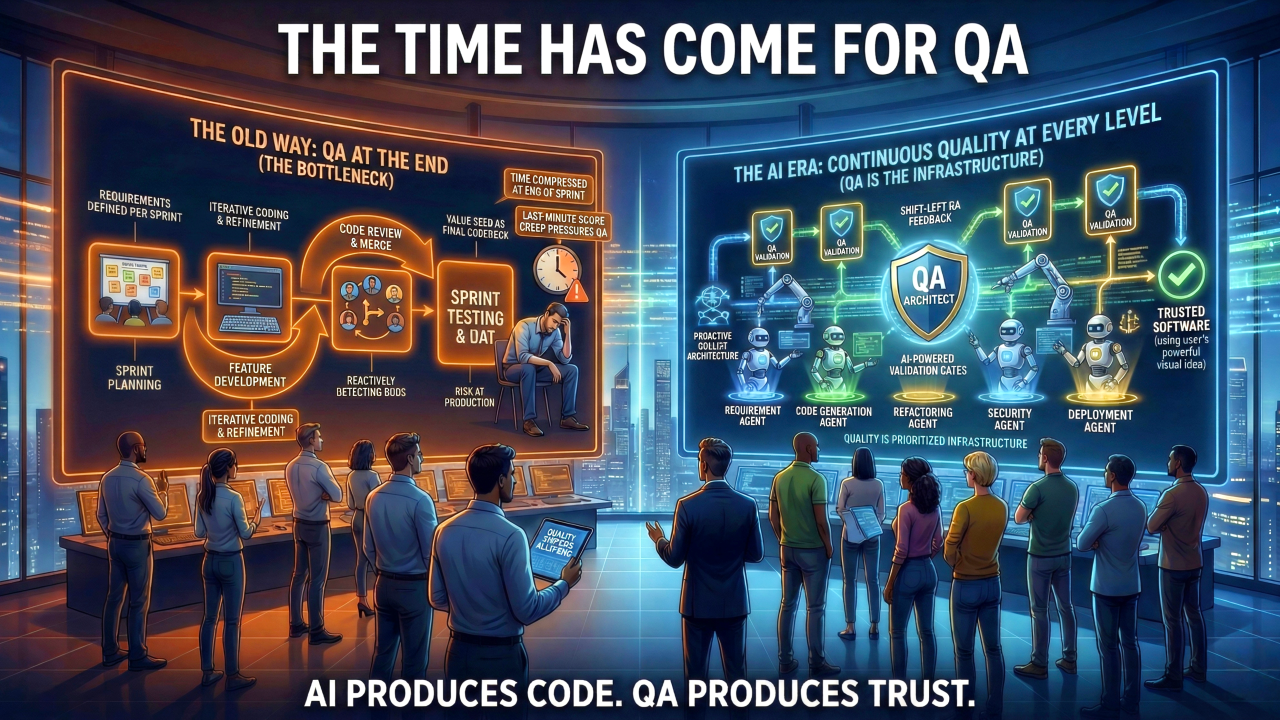

AI Writes the Code Now. Who Validates It?

For years the industry survived with this broken model because development speed had natural limits. Human engineers could only write so much code. Timelines created breathing room, even when that room was misused.

AI removed those limits completely.

Code generation is no longer restricted to developers. Multi-agent workflows can generate, review, and modify code automatically. Product managers, business analysts, founders, anyone who can clearly describe a problem can now trigger software creation without writing a single line of code themselves.

Defects can now be created faster than ever, by more people than ever, with less engineering oversight than ever before.

The old model, where QA manually validates everything at the end of development, collapses immediately in this environment. No QA team on the planet can keep pace with the volume of software that AI-driven development will produce.

Speed without quality systems does not mean faster delivery. It means faster failure at a scale the industry has never seen.

The math never worked. Now it is completely indefensible.

The ideas QA always had are now survival requirements.

Shift-left testing. Testability in architecture. Risk-based validation. Continuous quality signals. Validation embedded into pipelines.

For years these were dismissed as QA topics. Things the testing team worried about. Not real engineering priorities.

Now they are what every engineering team is scrambling to implement under pressure, often without the institutional knowledge that QA teams spent years building.

The industry spent years ignoring what QA was saying. Now it is paying to learn the same lessons, under pressure, at speed.

Quality cannot be inspected into a system at the end. It has to be designed in from the beginning.
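Risk-based validation, one of the ideas listed above, is simple to sketch. The 1 to 5 scales, the likelihood-times-impact score, and the example changes below are assumptions chosen for illustration. Real teams weight risk in many different ways.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Change:
    name: str
    failure_likelihood: int  # assumed scale: 1 (unlikely) to 5 (likely)
    business_impact: int     # assumed scale: 1 (minor) to 5 (severe)

    @property
    def risk(self) -> int:
        # A common simple heuristic: likelihood multiplied by impact.
        return self.failure_likelihood * self.business_impact


def validation_order(changes: list[Change]) -> list[str]:
    """Highest-risk changes first, so a shrinking test window cuts low-risk work last."""
    ranked = sorted(changes, key=lambda change: change.risk, reverse=True)
    return [change.name for change in ranked]


# Hypothetical changes in one release.
changes = [
    Change("footer copy update", 1, 1),
    Change("payment retry logic", 4, 5),
    Change("search filter refactor", 3, 2),
]

print(validation_order(changes))
# ['payment retry logic', 'search filter refactor', 'footer copy update']
```

The scoring itself is trivial. The expertise is in knowing which numbers to put in, and that knowledge is exactly what QA teams have been building for years.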

QA was never just test execution.

The biggest mistake QA teams made over the years was accepting the role of test executors. Running scripts. Filing tickets. Executing cases written by someone else against software designed by someone else.

That role was always too small. And accepting it allowed the industry to keep pretending that testing and quality were the same thing.

They are not.

The real expertise of QA engineers is not writing test cases.

It is understanding how systems fail.

Edge cases. Risk patterns. Coverage gaps. System behavior under stress. The places where assumptions meet reality and shatter. The scenarios developers never thought to consider. The failure modes that only become visible when someone is actively looking for them.

These are not secondary engineering skills. They are system design skills.

QA engineers don't just find bugs. They understand failure. That is one of the most valuable capabilities an engineering organization can have.

In the AI era, QA should not be asking for a seat at the table.

QA should be designing the table.

The Team That Was Dismissed Is the Team the Industry Now Needs.

The people who understand how to build quality systems have been sitting inside engineering teams all along.

Whose suggestions were quietly deprioritized. Whose concerns were labelled as blockers. Whose overtime was treated as a normal cost of doing business. Who found the defects that saved products from failure and received no recognition for it. Who raised the risk and got a lower rating for it.

They were never the bottleneck. They were the ones holding the line.

They were QA.

In the AI Era, Everyone Becomes the Validator. QA Becomes the Architect.

In the AI era, something fundamental has shifted in how software gets built.

AI is becoming the developer. Everyone else is becoming the validator.

Product managers validate requirements. Developers validate generated code. DevOps validates infrastructure outputs. Business teams validate functionality before it reaches users.

Validation is no longer a QA-only activity. It is the core activity of the entire organization.

And who understands validation better than anyone else in the building?

QA does.

QA engineers have spent years thinking deeply about what it means to verify something properly. How to design coverage that actually matters. How to assess risk before it becomes an incident. How to build systems that catch failures before users encounter them. How to ask the question nobody else is asking: what could break here, and how would we know when it does?

That expertise is not a support function anymore.

In an AI-driven SDLC, QA is the team that designs the quality systems every other team depends on.

Not just test cases. Not just automation scripts.

Quality strategy for the entire development lifecycle.

The standards developers follow when AI generates code at speed. The validation gates DevOps embeds into pipelines. The coverage frameworks product teams use to review requirements before a line of code is written. The risk signals that tell leadership when to slow down and when it is genuinely safe to ship.

QA does not plug into the SDLC anymore. QA architects it.
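A validation gate of the kind described above can start very small. The sketch below is one possible shape, under assumptions: the three signals, their names, and the zero-critical-defects threshold are hypothetical and would be defined by each organization. What matters is that the gate returns reasons, not just a verdict, so leadership sees why a release should wait.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QualitySignals:
    # Hypothetical signals a pipeline might collect before release.
    happy_path_passed: bool
    open_critical_defects: int
    contract_checks_passed: bool


def release_gate(signals: QualitySignals) -> tuple[bool, list[str]]:
    """Return (safe_to_ship, reasons). An empty reasons list means the gate is open."""
    reasons = []
    if not signals.happy_path_passed:
        reasons.append("happy-path checks failing")
    if signals.open_critical_defects > 0:
        reasons.append(f"{signals.open_critical_defects} open critical defect(s)")
    if not signals.contract_checks_passed:
        reasons.append("service contract checks failing")
    return (not reasons, reasons)


ready = release_gate(QualitySignals(True, 0, True))
blocked = release_gate(QualitySignals(True, 2, False))

print(ready)    # (True, [])
print(blocked)  # (False, ['2 open critical defect(s)', 'service contract checks failing'])
```

Because the rules live in the pipeline, nobody can quietly soften them in a one-on-one the night before release.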

The freshers who sent those emails to HR asking to be moved out of QA were responding to a version of this role that is already disappearing.

The new version is something the industry has never seen before.

The team that was dismissed as the easiest job in the room is becoming the team that defines how software is built, validated, and trusted in a world where AI writes most of the code.

The time has come for QA. Not because the industry finally decided to be fair. But because the industry can no longer function without what QA knows.

If this reflects your experience, share it. The industry needs to hear it from the people who lived it.

#QualityEngineering #QA #SoftwareTesting #SoftwareEngineering #TechCulture #AIinDevelopment #EngineeringLeadership #QualityAssurance